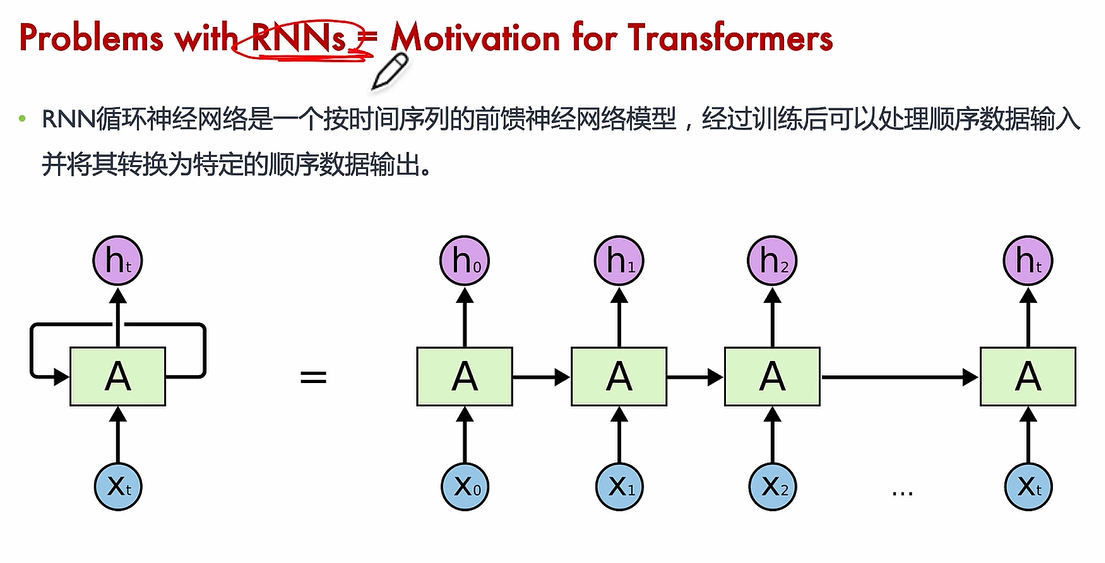

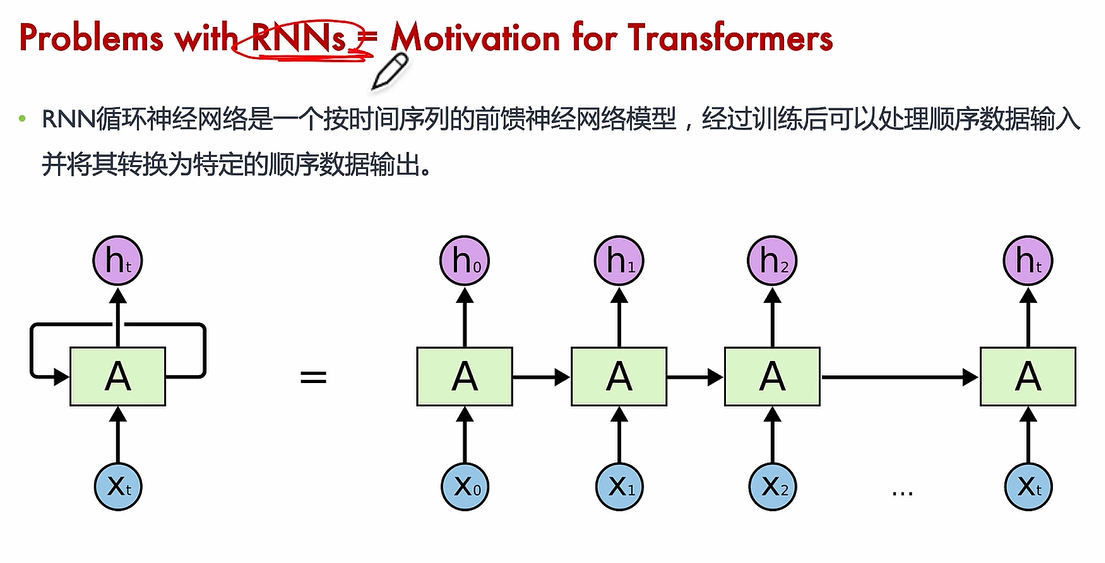

Motivation

slow to train

short memory

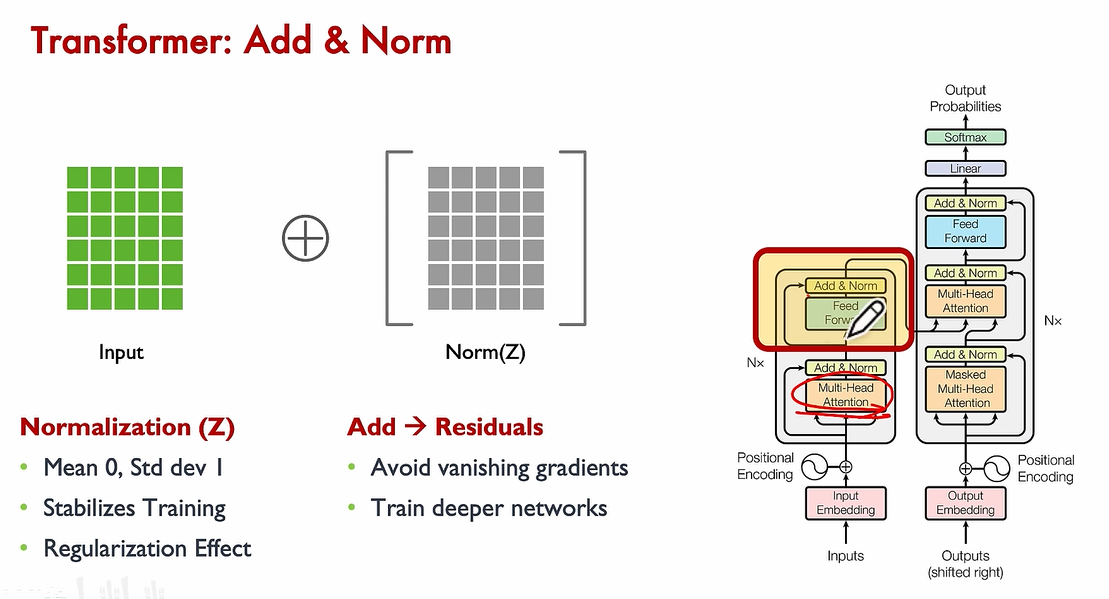

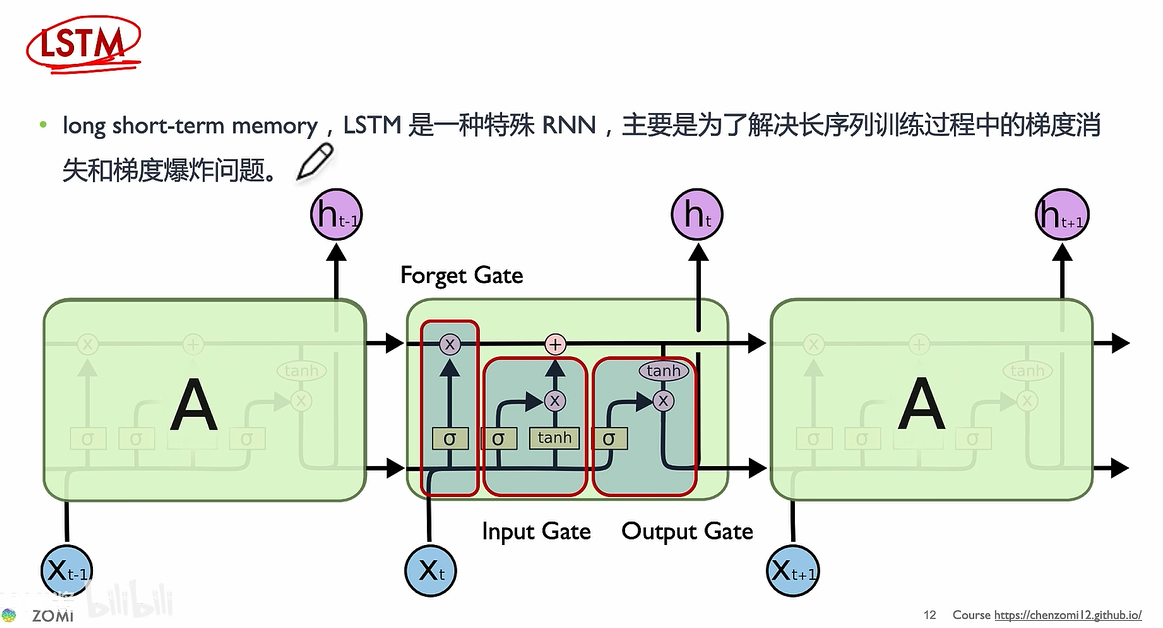

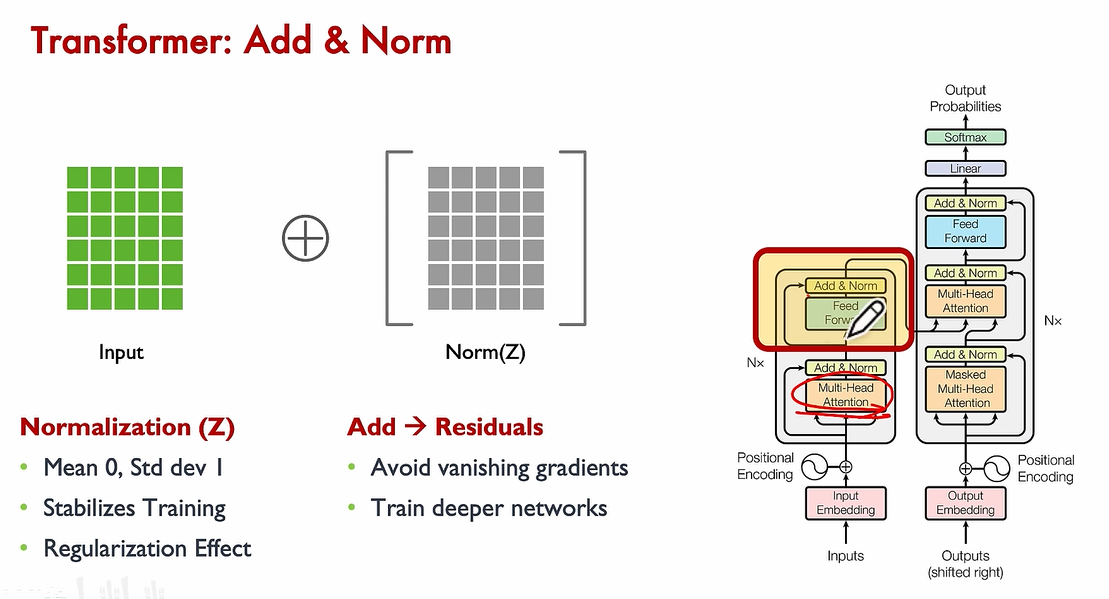

vanishing gradient

slower to train

short memory

vanishing gradient

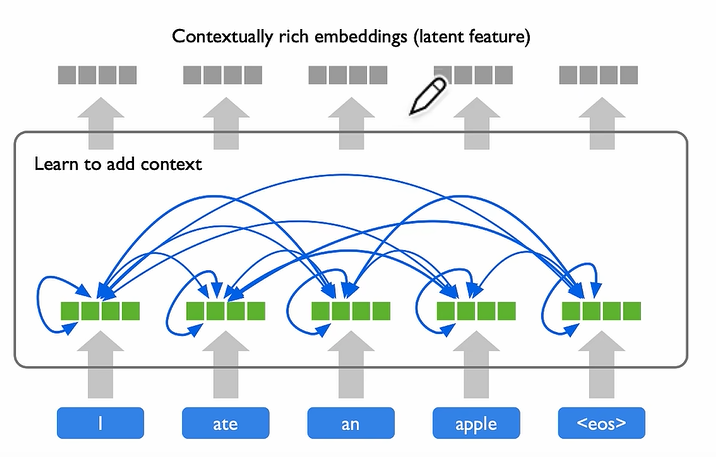

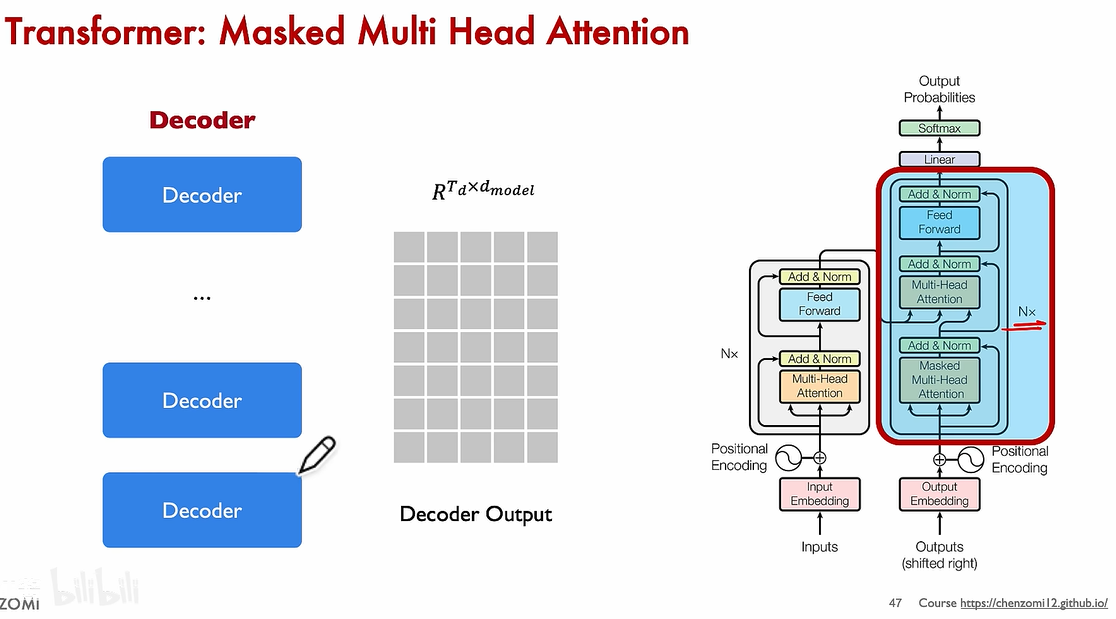

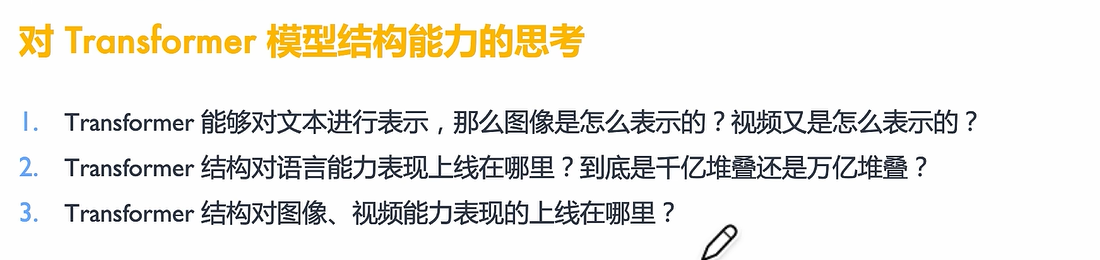

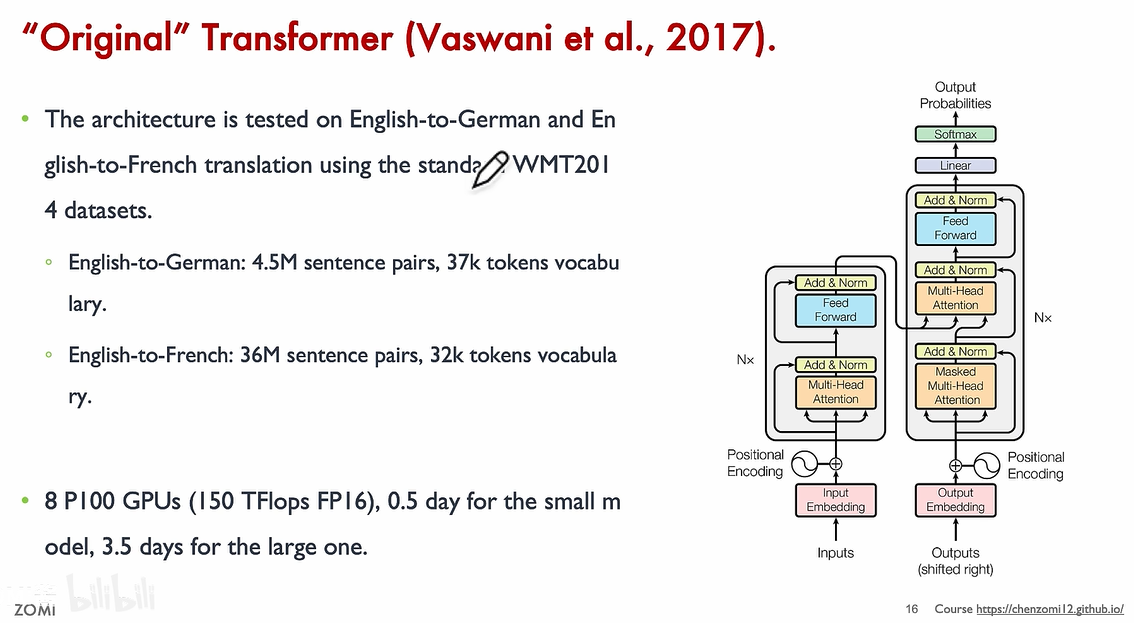

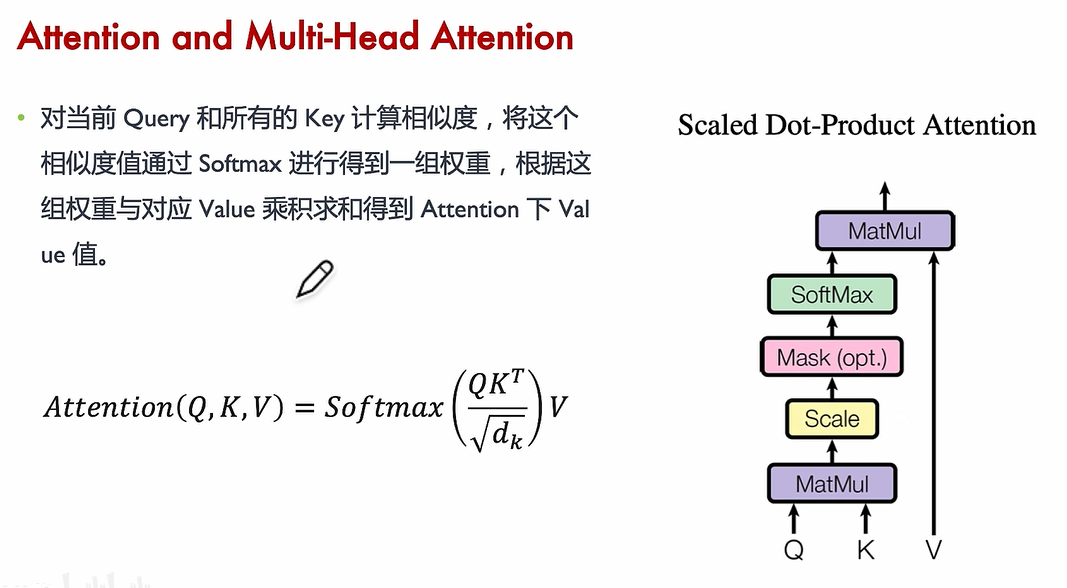

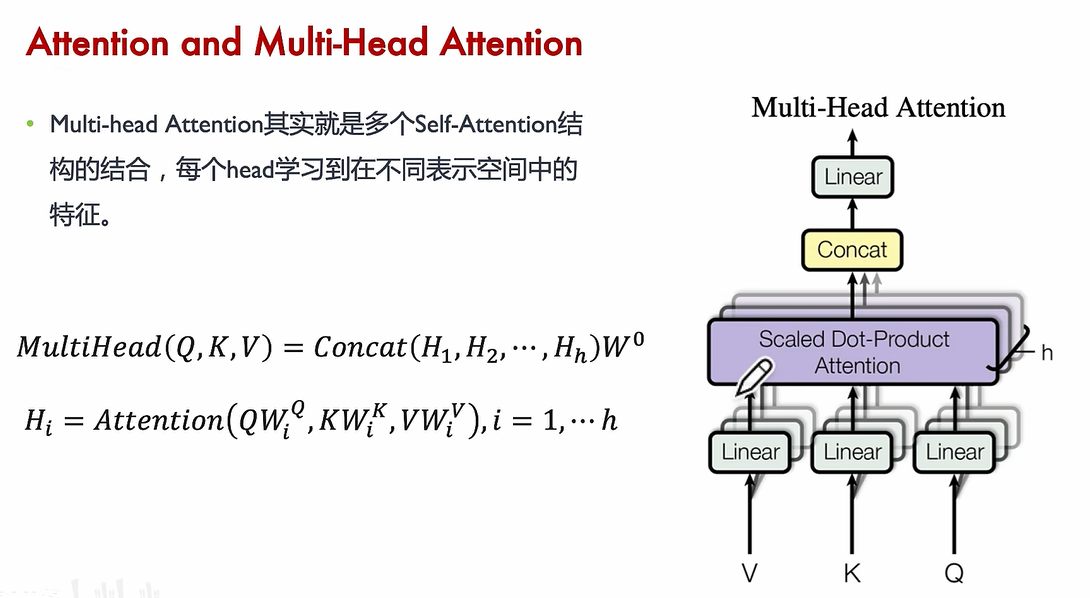

Transformer Attention

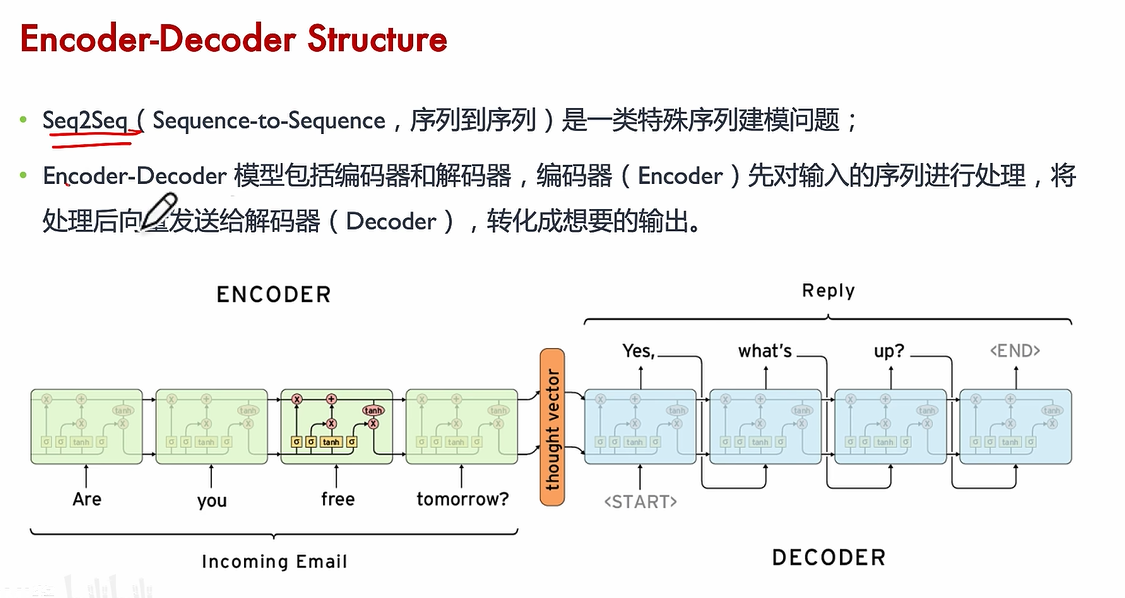

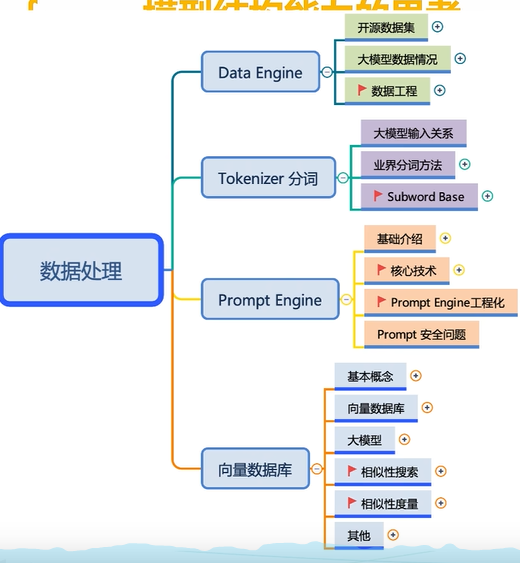
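As a rough illustration of the attention step named on this slide, here is a minimal sketch of scaled dot-product attention in plain Python. The function names `softmax` and `scaled_dot_product_attention` and the toy inputs are assumptions made for this example and are not taken from the slides. It shows a single head with no masking and no learned projections.

```python
import math


def softmax(scores):
    """Turn a list of raw scores into weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Single-head attention over lists of vectors (hypothetical helper).

    For each query: score every key by dot product, scale by sqrt(d_k),
    softmax the scores, then return the weighted sum of the values.
    """
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Toy usage: one query attending over two key/value pairs.
result = scaled_dot_product_attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[1.0, 2.0], [3.0, 4.0]],
)
print(result)
```

Because the query matches the first key more closely, the output lands nearer the first value vector than the second. Every query is scored against all keys independently, so this step has no sequential dependency across positions, unlike the recurrent models listed under Motivation.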

Task: machine translation

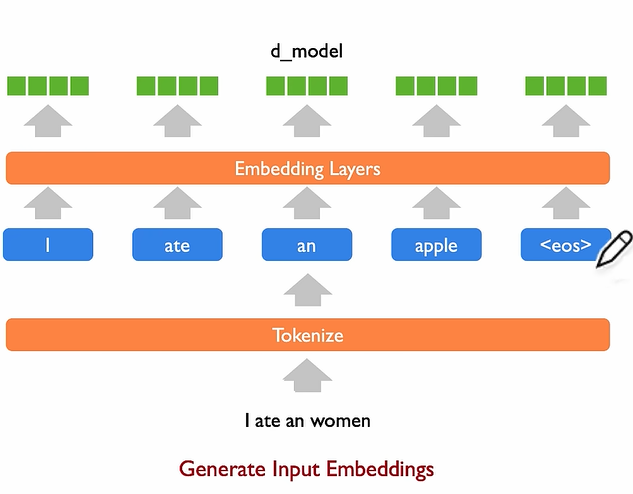

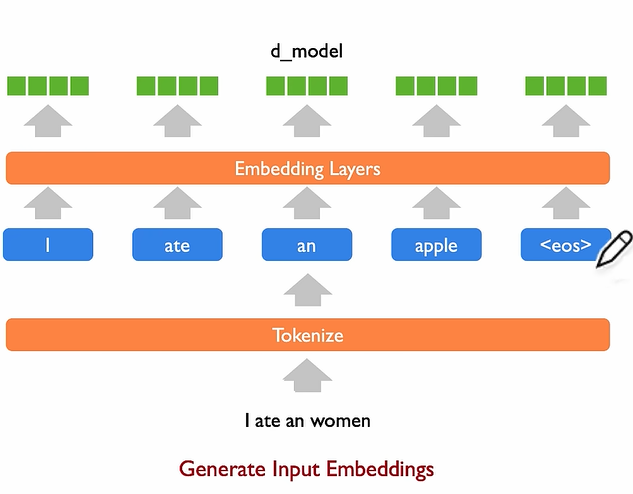

input

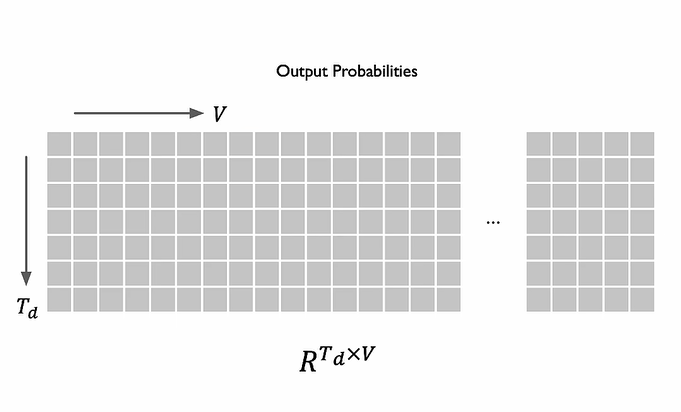

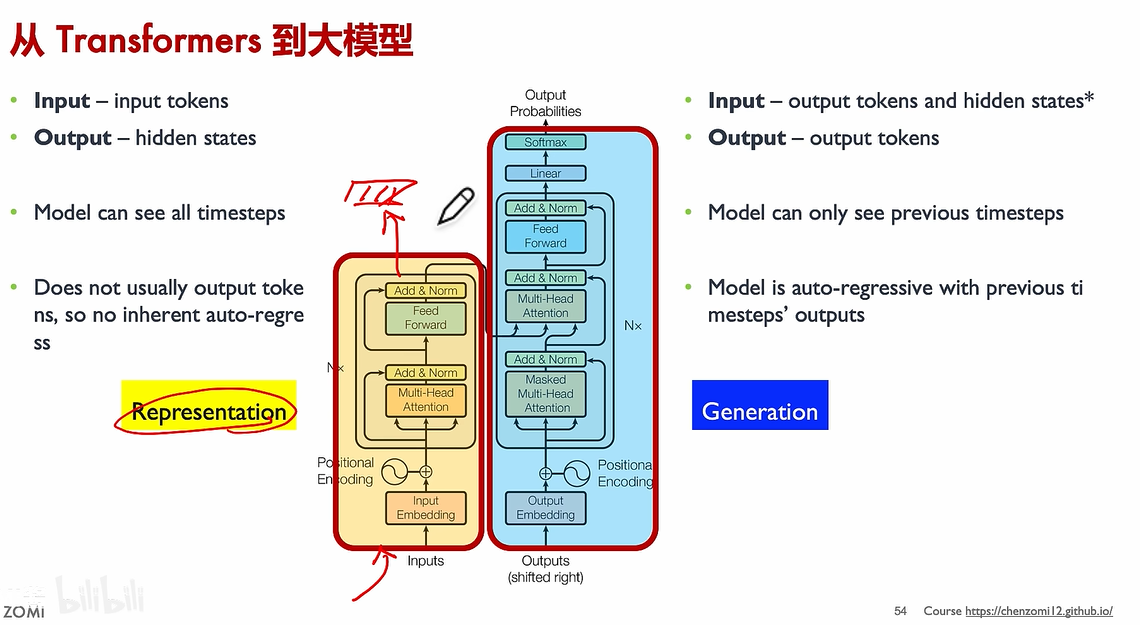

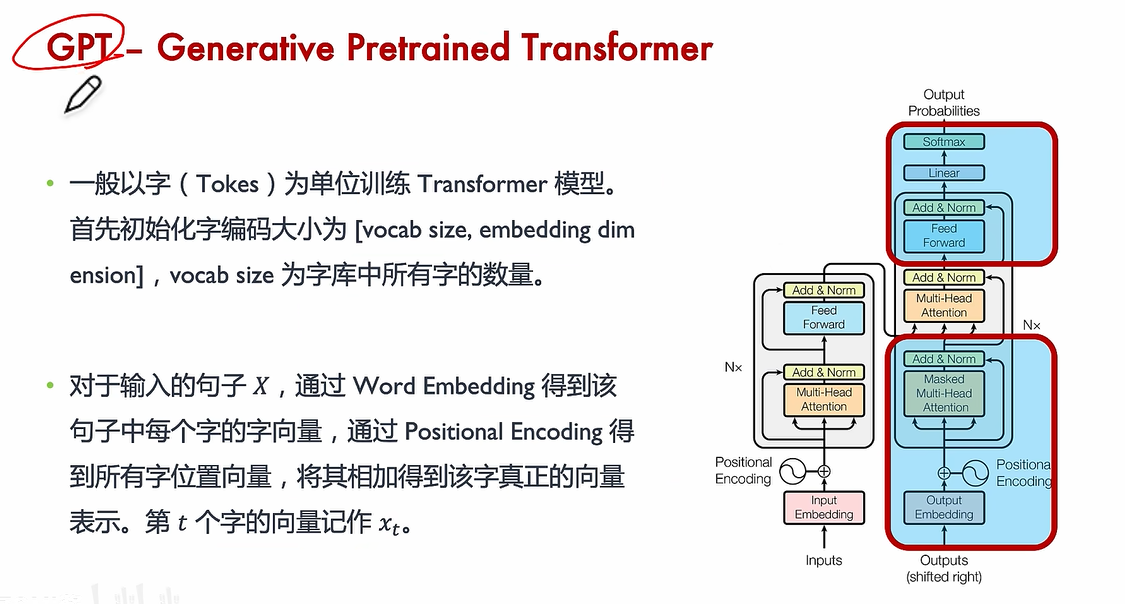

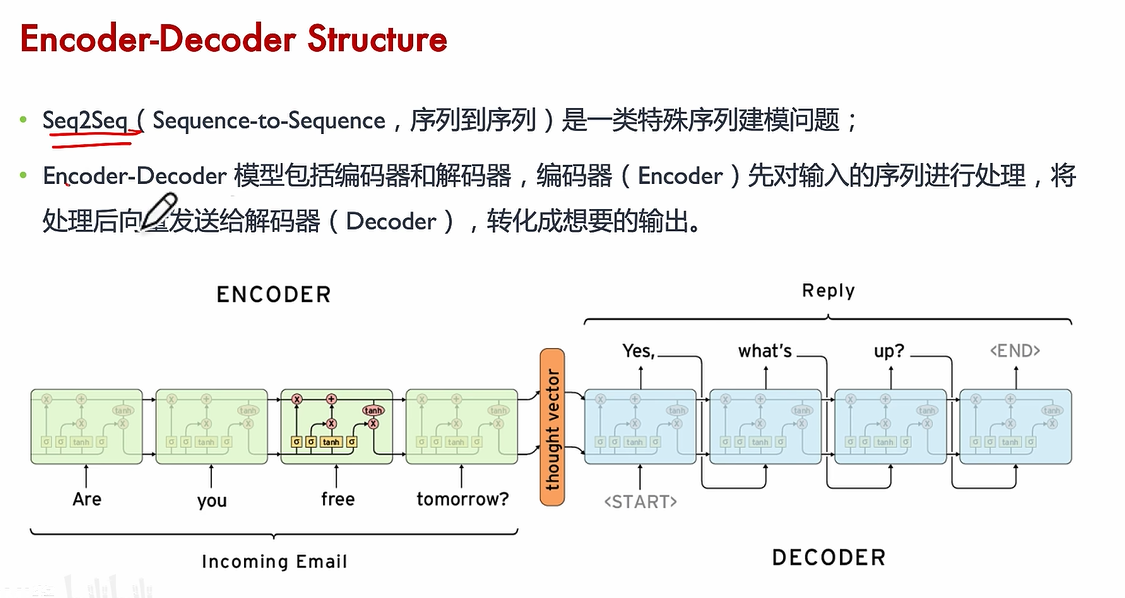

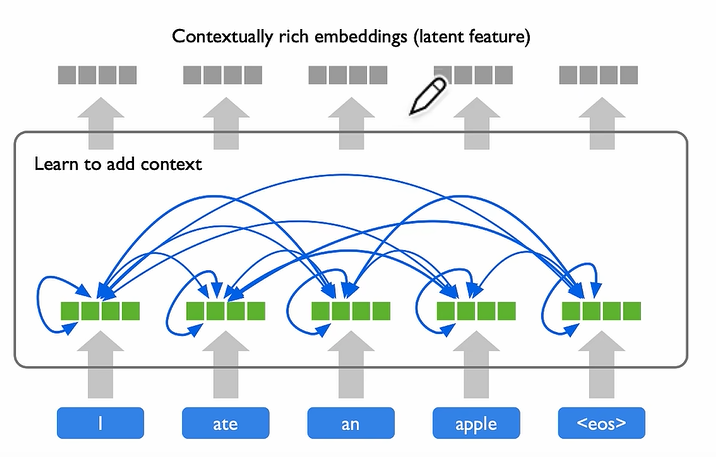

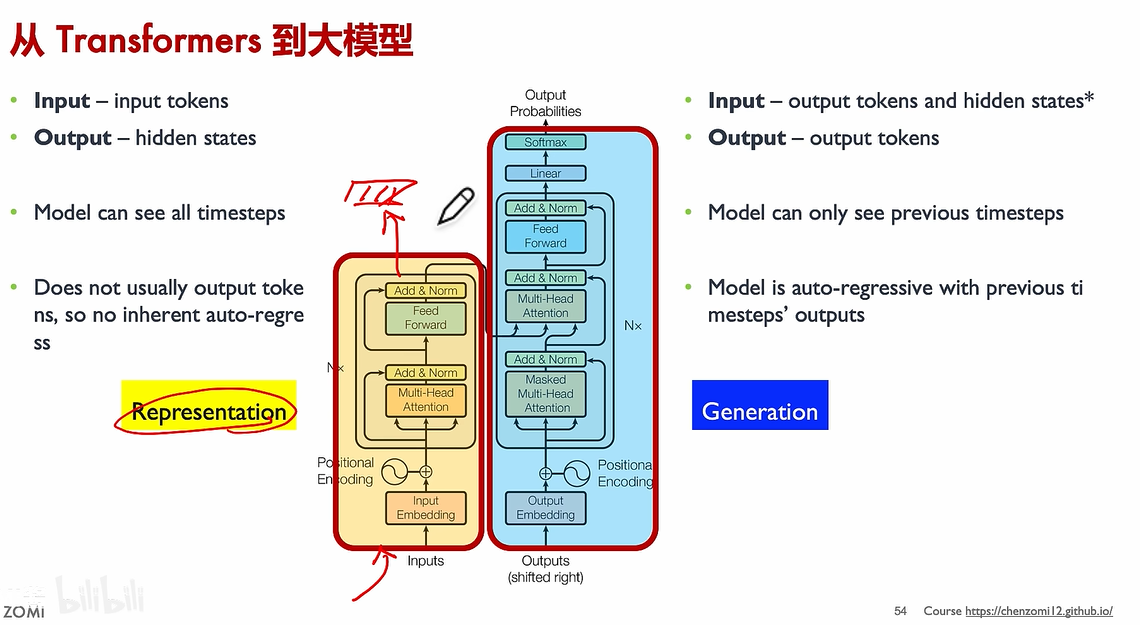

Encoder

to → hidden layer

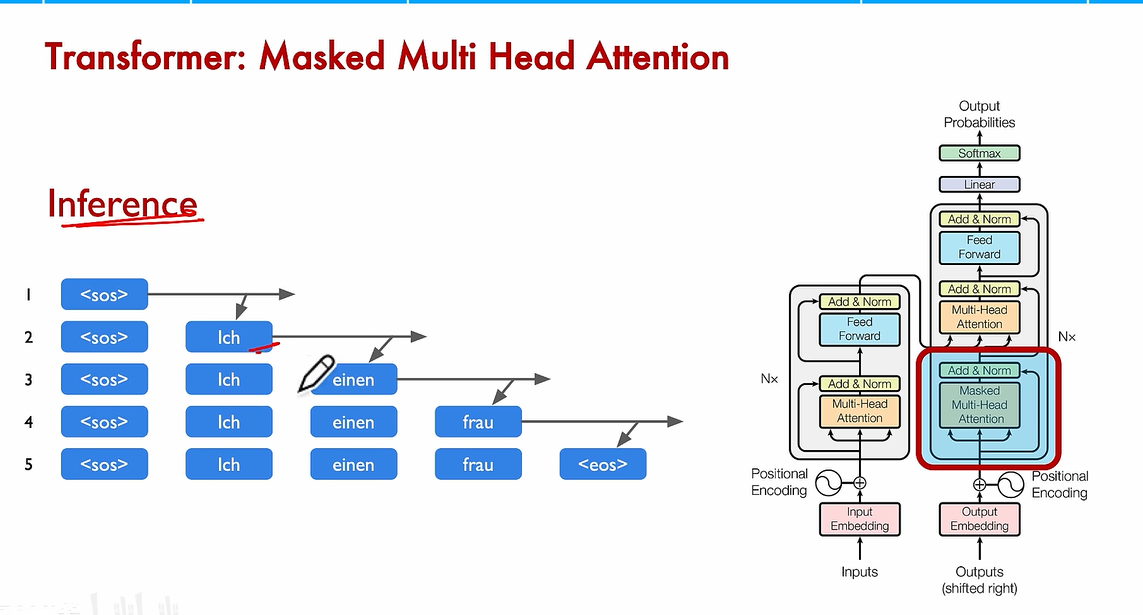

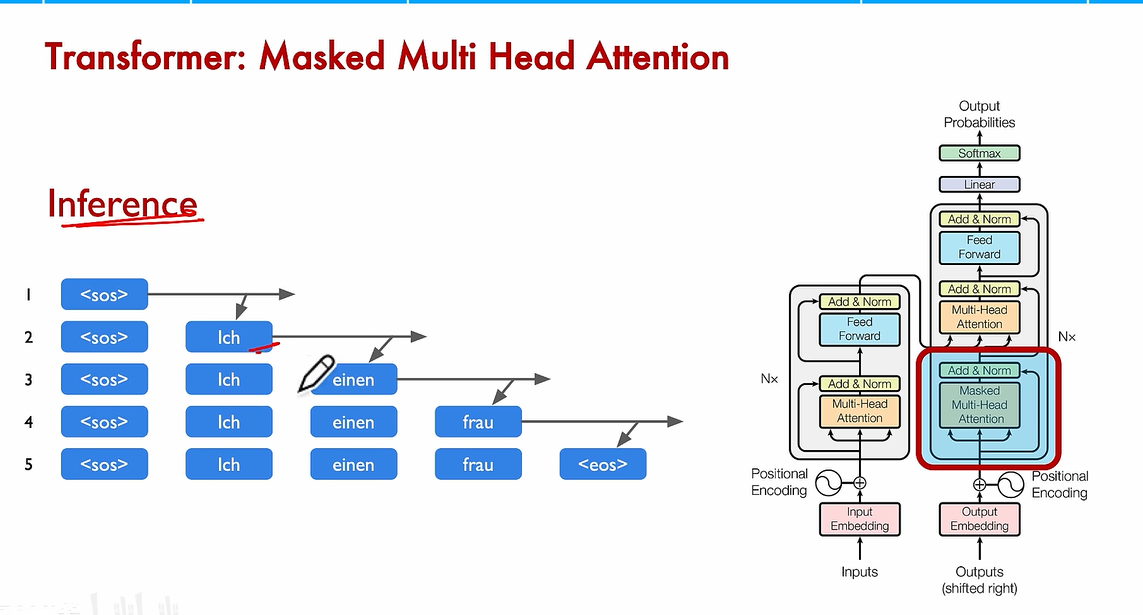

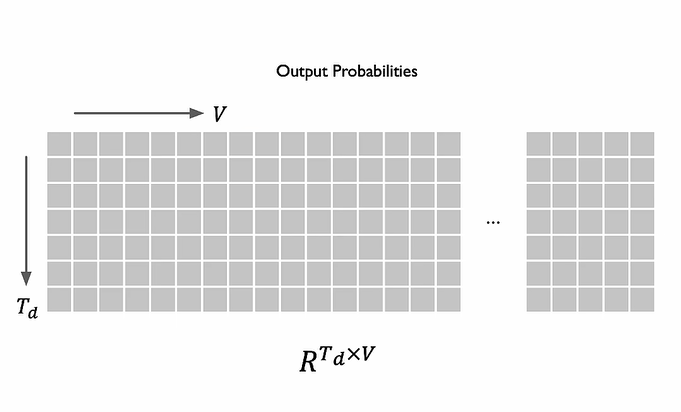

inference

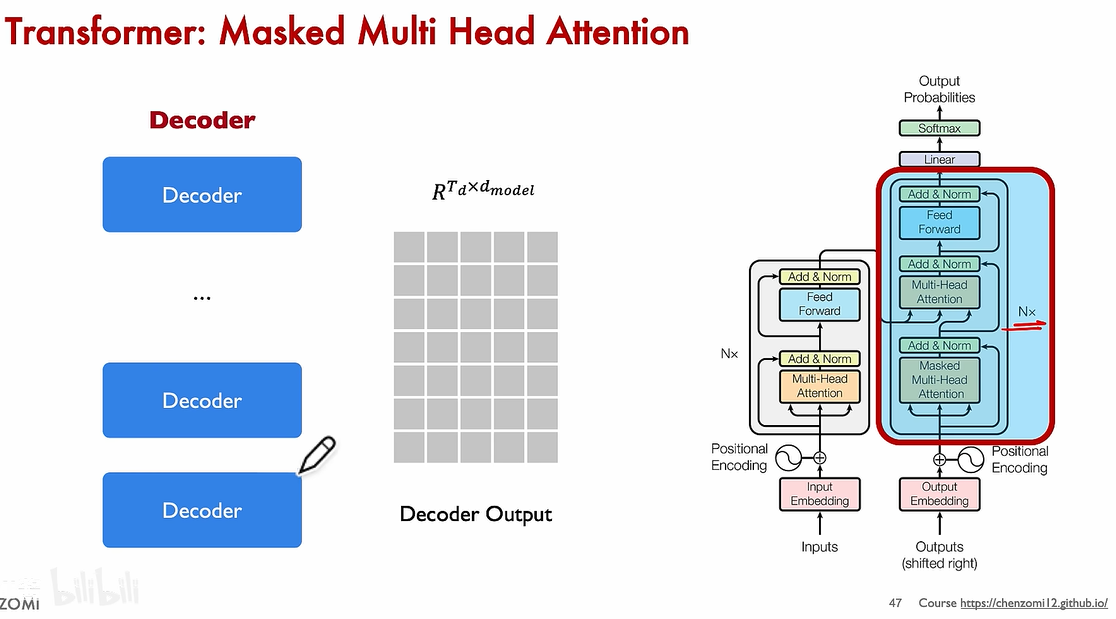

Decoder

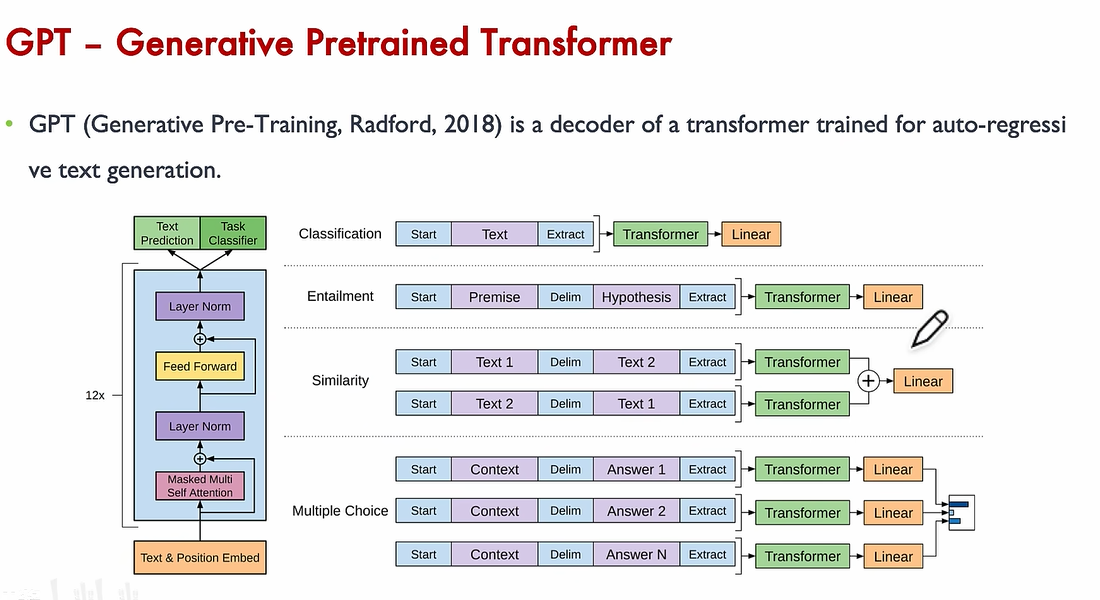

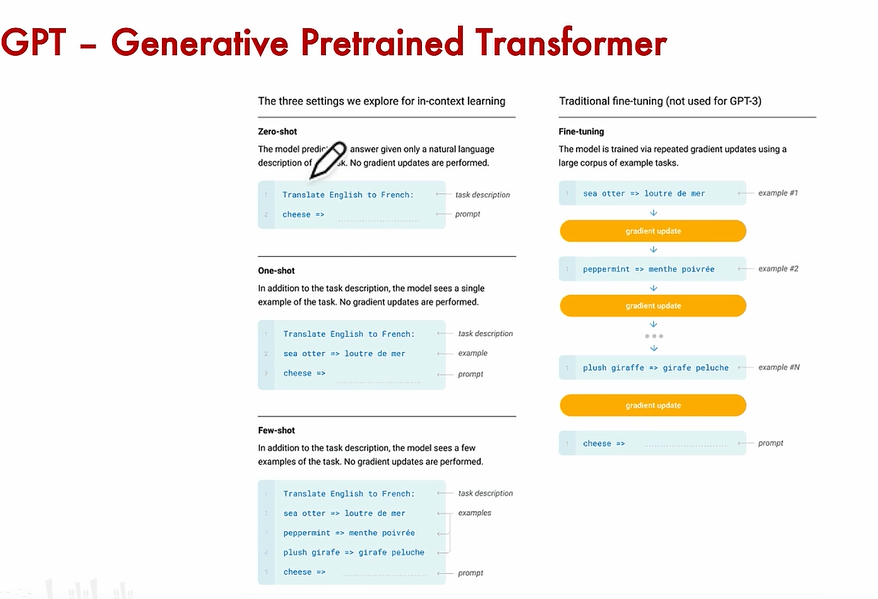

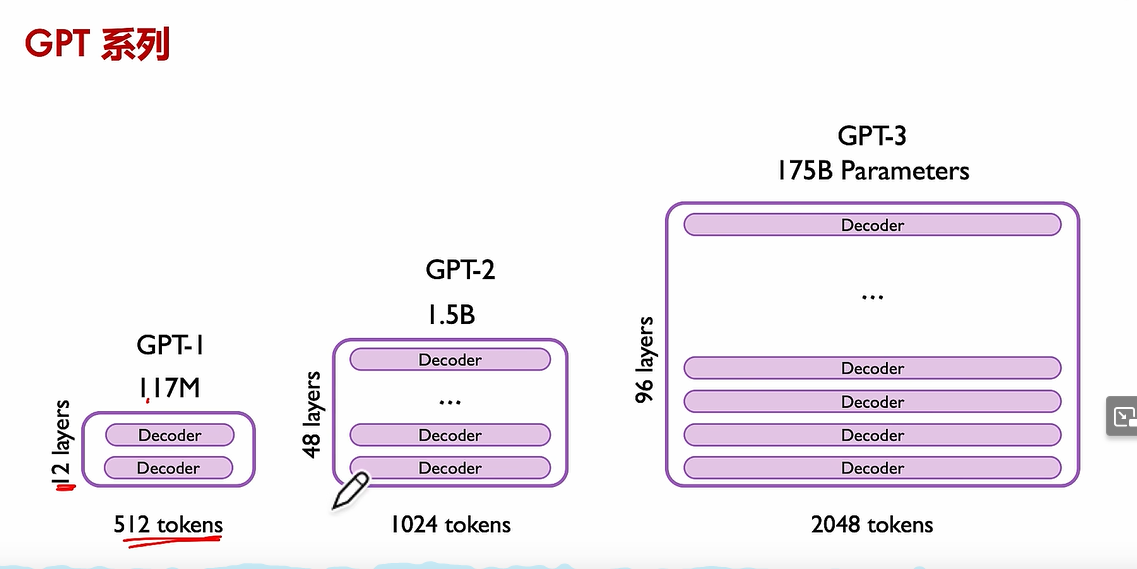

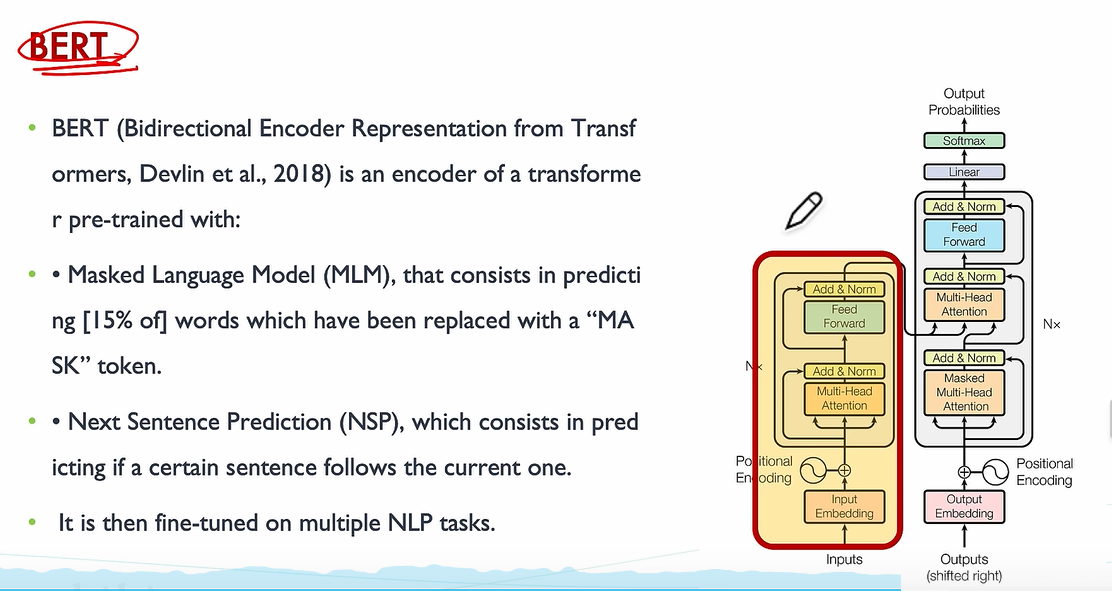

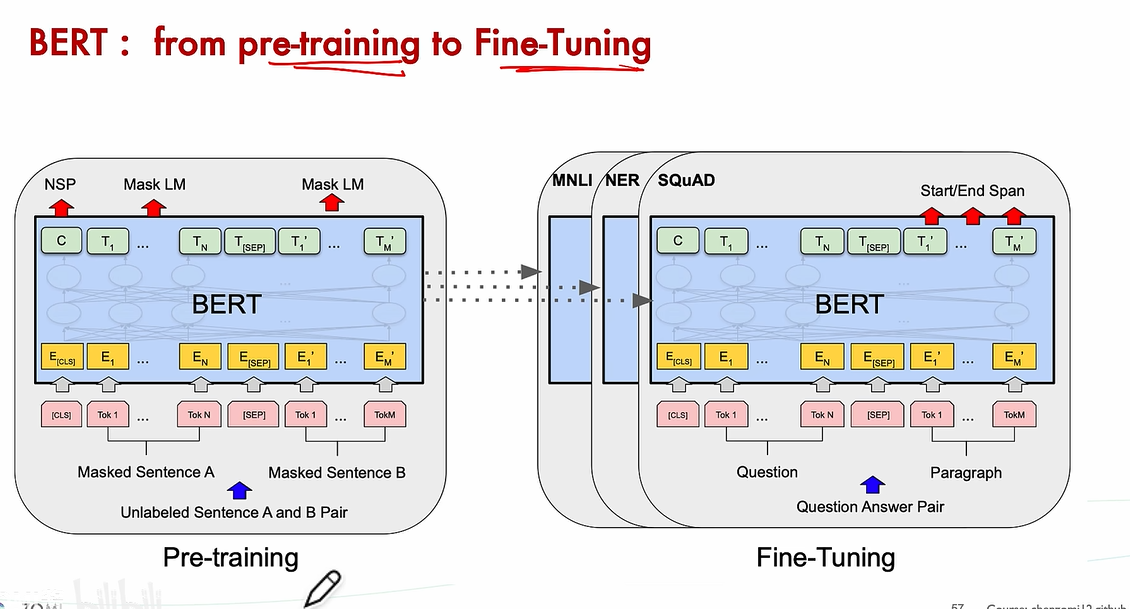

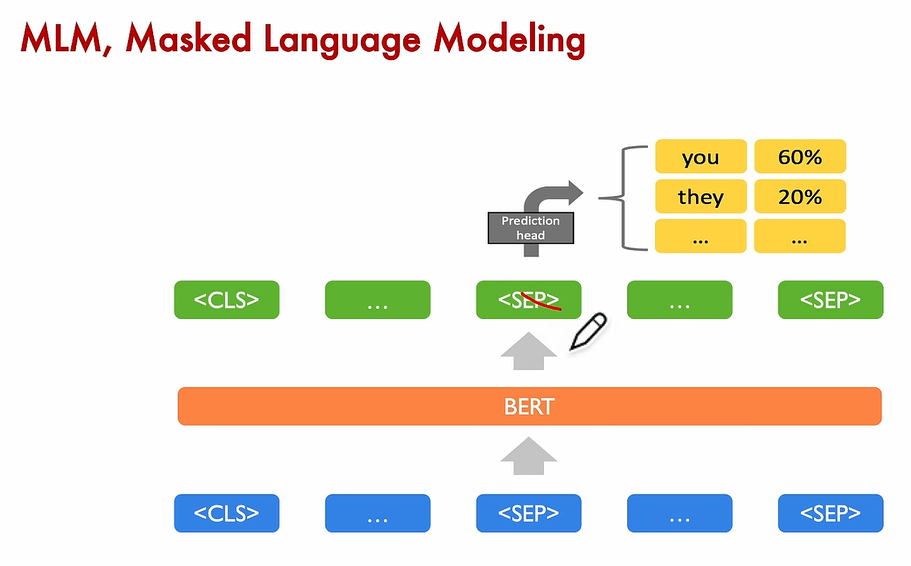

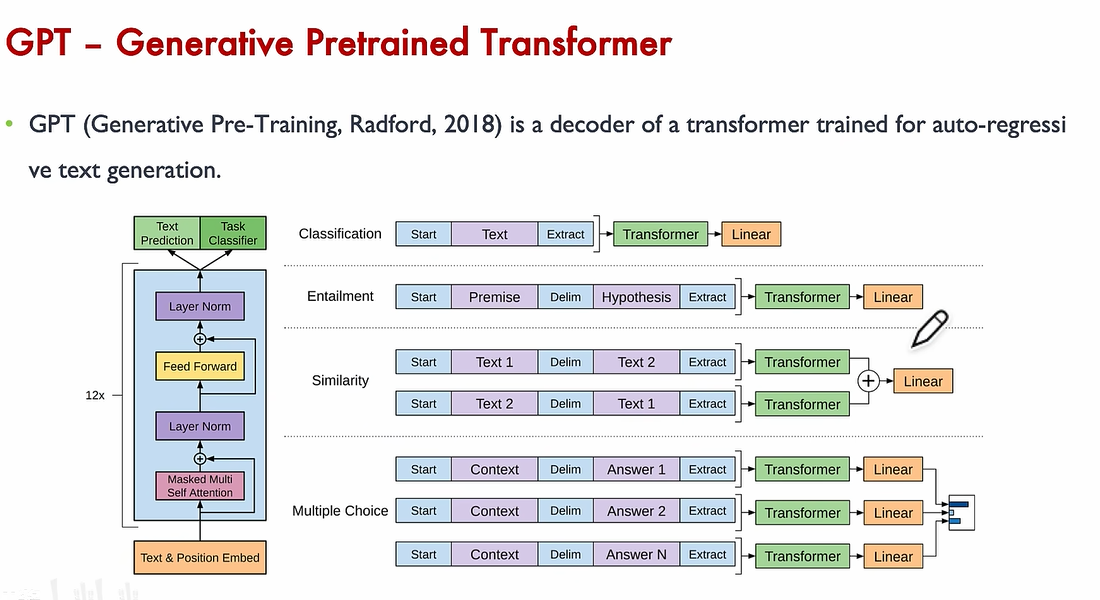

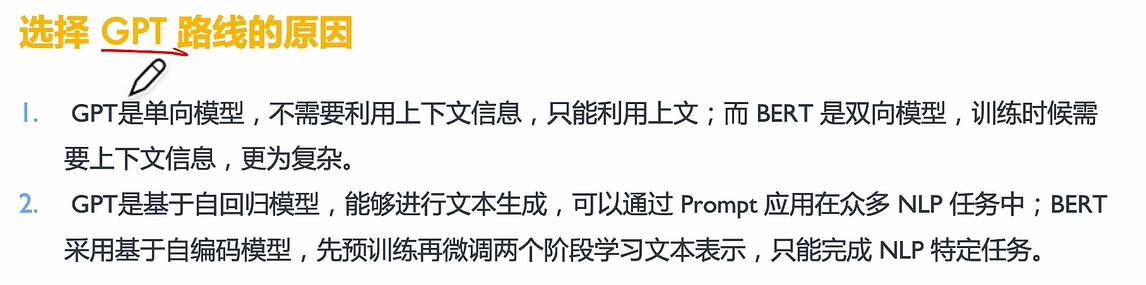

BERT vs. GPT

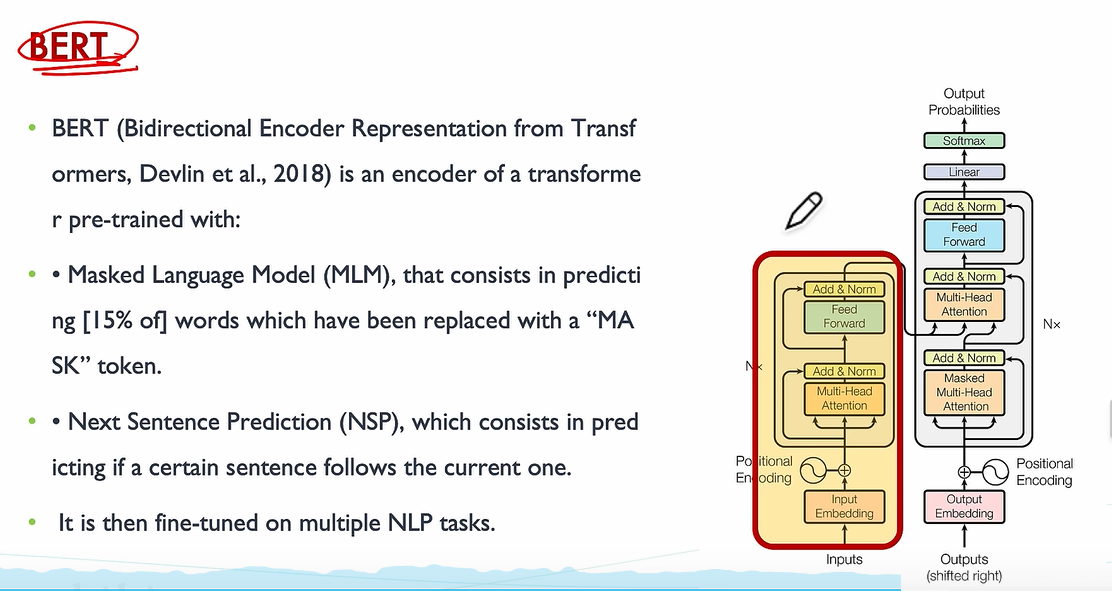

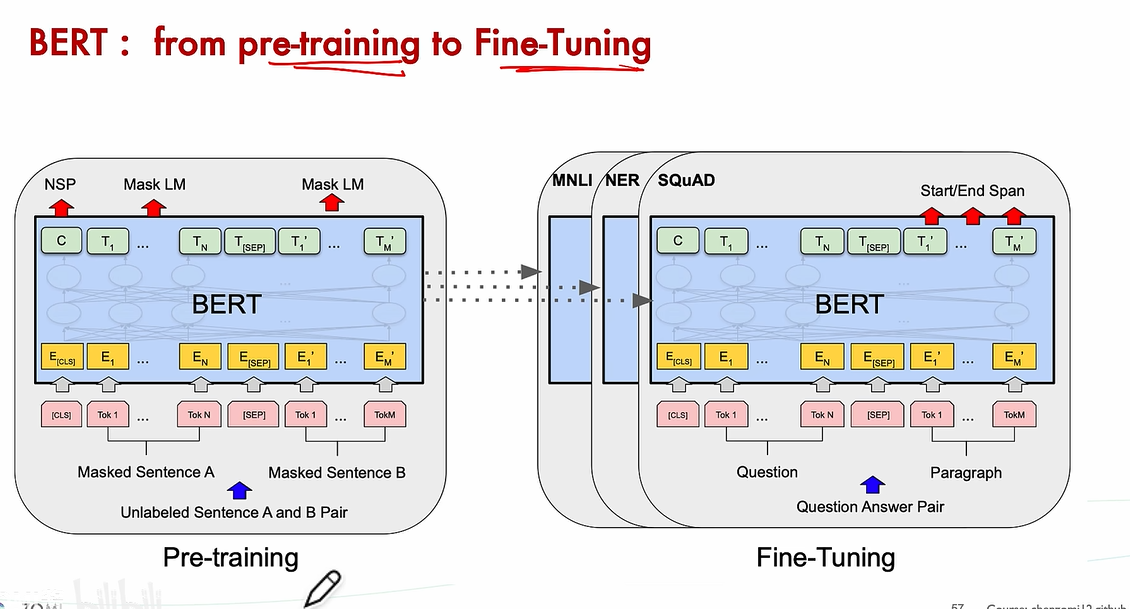

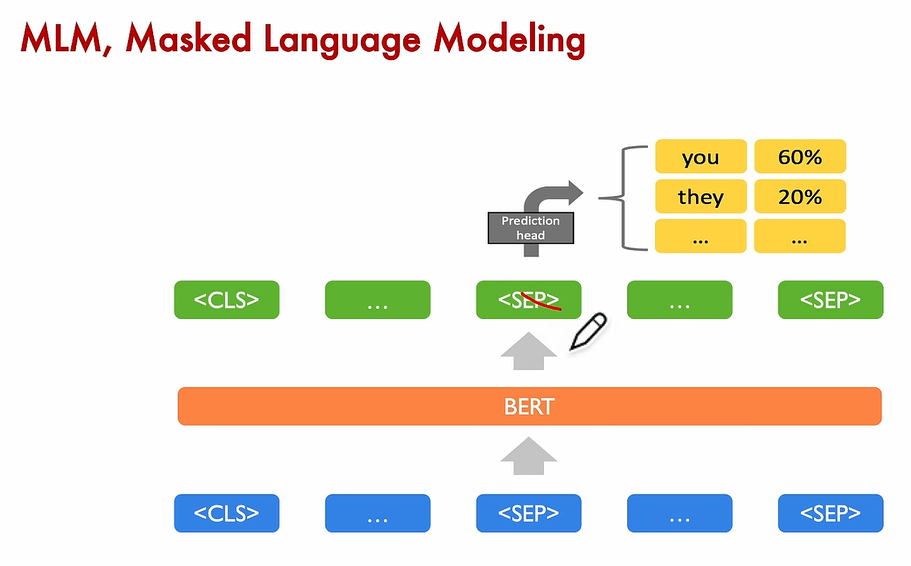

BERT, Oct 2018

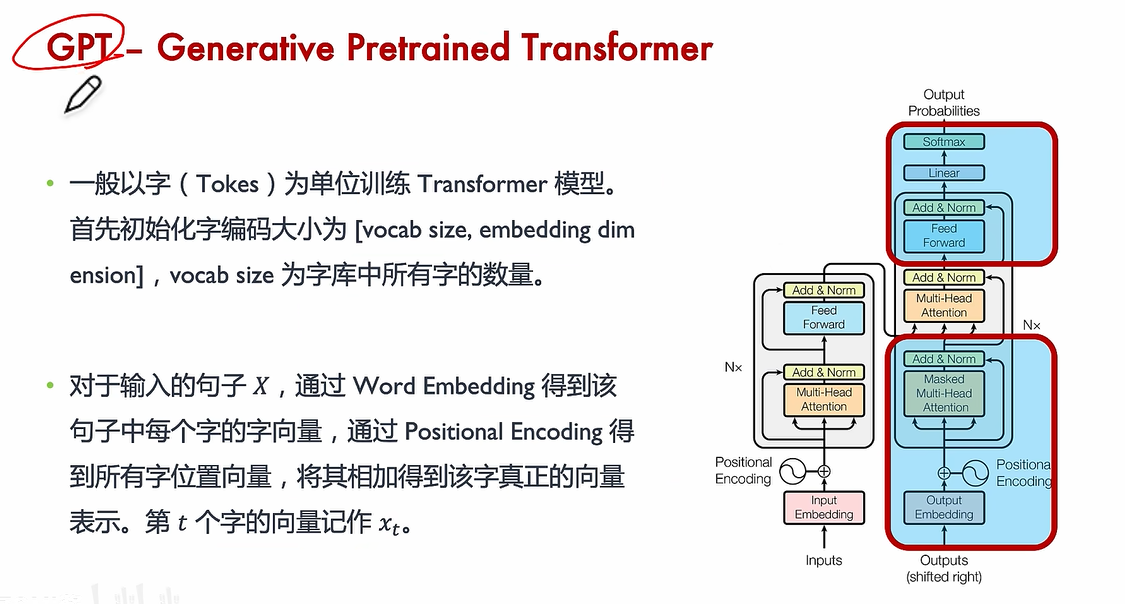

GPT-1, Jun 2018

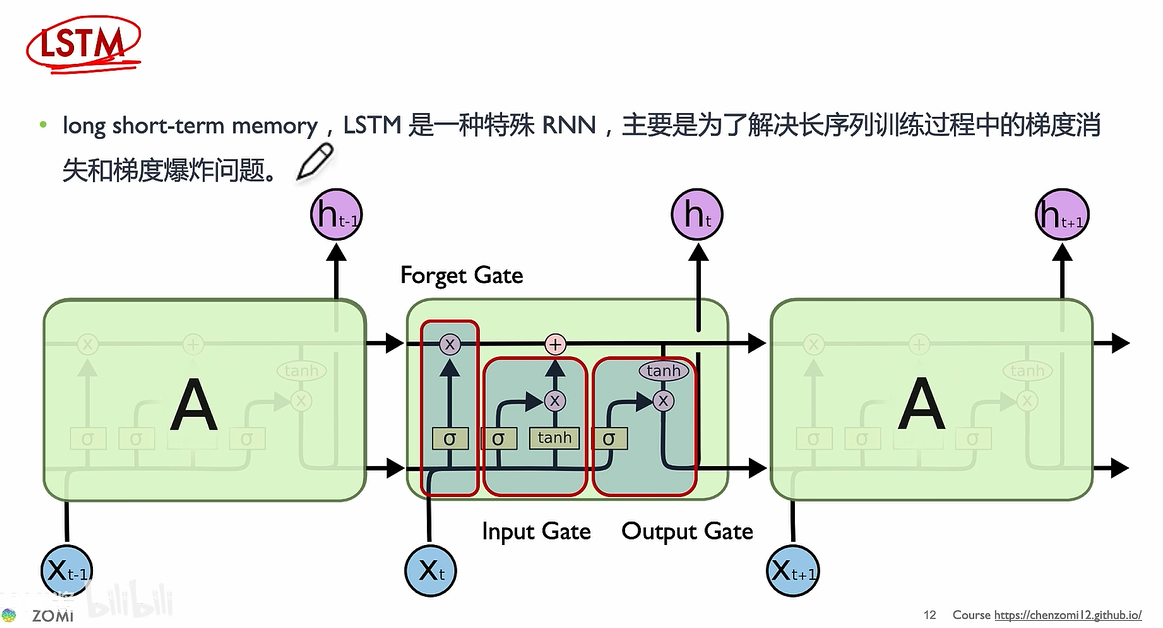


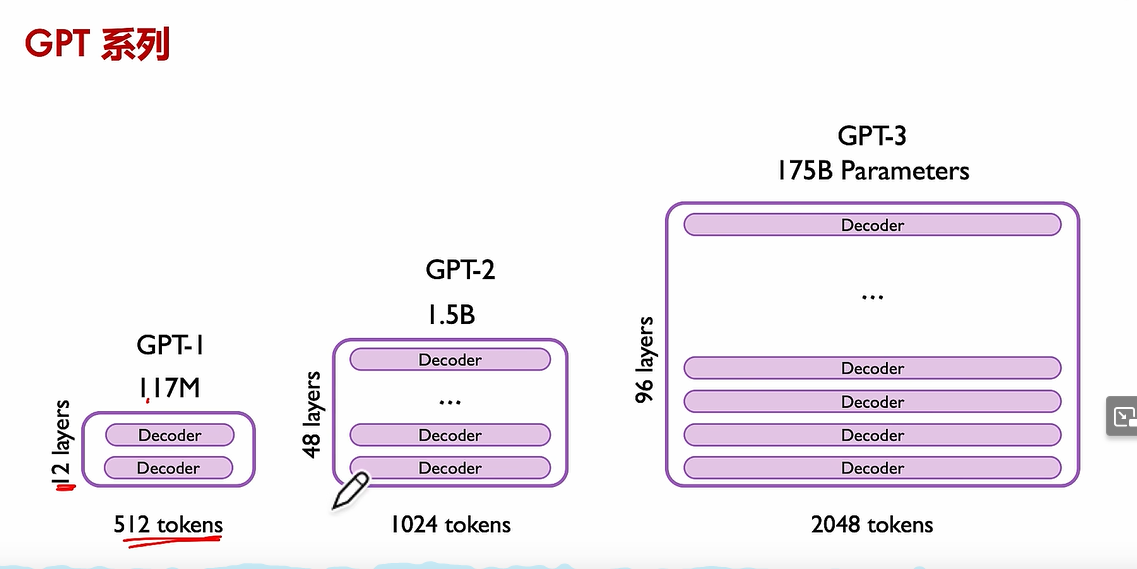
